ChatOBD2

AI-native automotive diagnostics product, designed and engineered solo

- 3 Product surfaces shipped

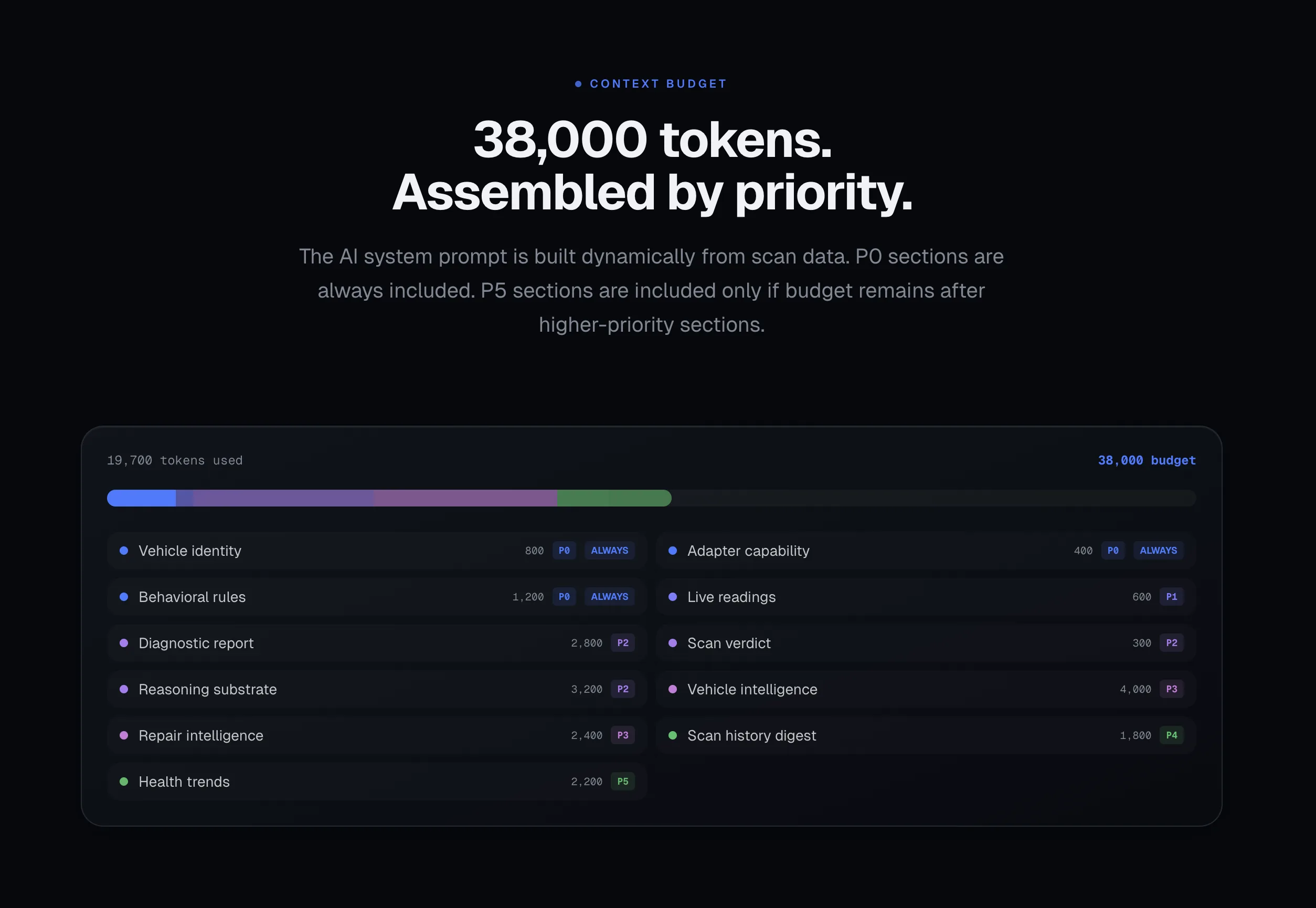

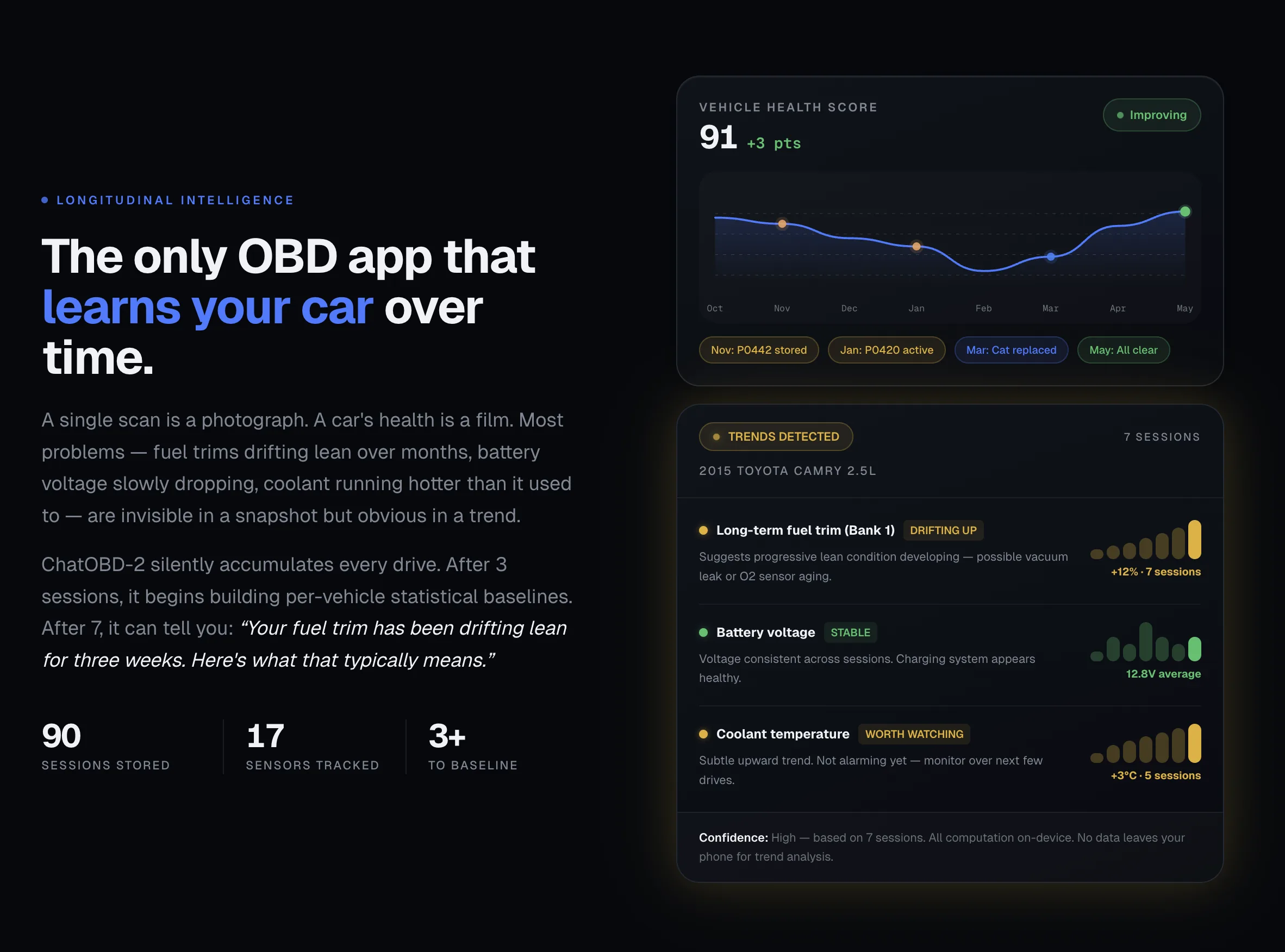

- 38k Token budget per scan

- 6 Reasoning layers before model call

- Designed and built by one person

The premise

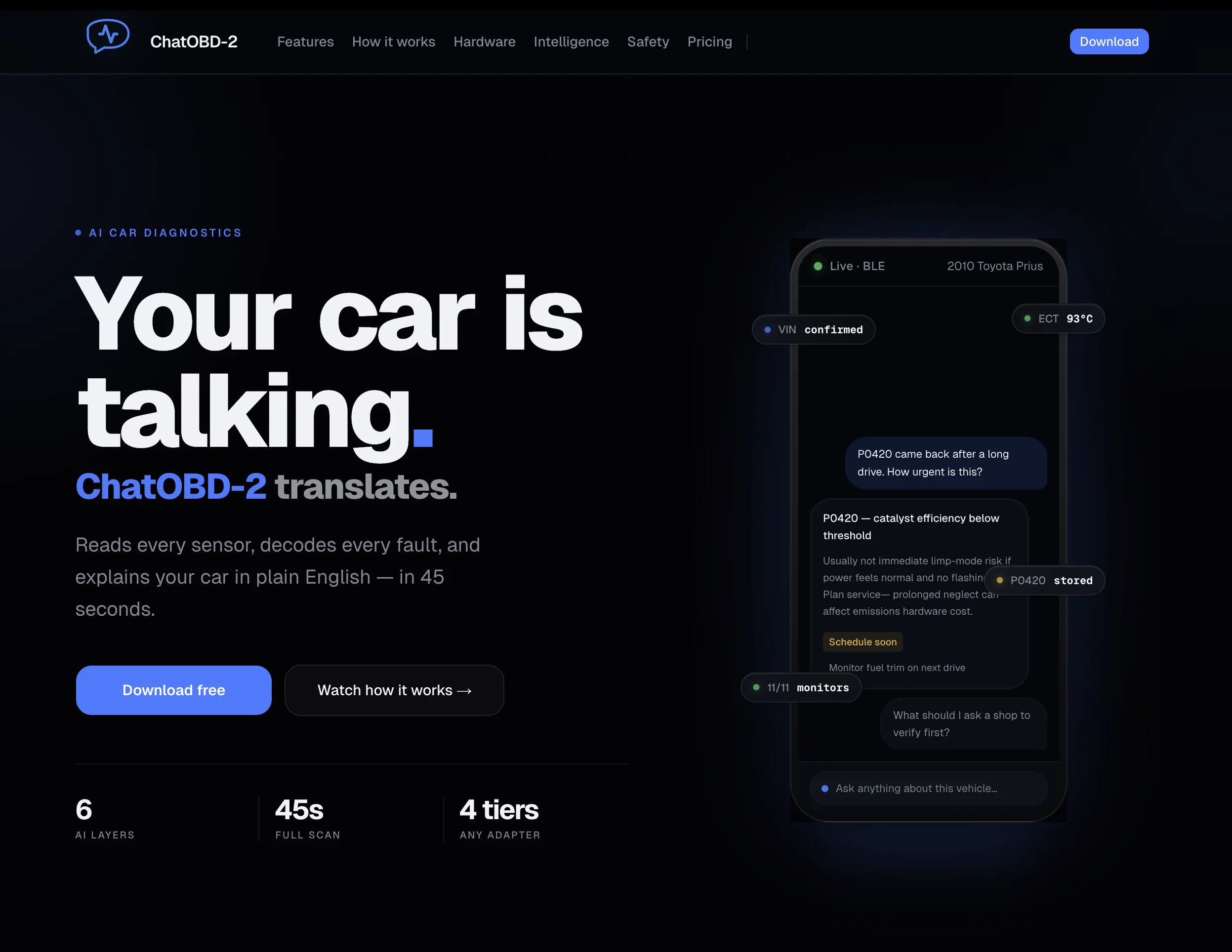

Diagnostic apps for cars are written by engineers for engineers. Codes, freeze frames, partial explanations, all surfaced raw. The driver staring at a screen is supposed to take all of that and produce a decision: drive it, don’t drive it, fix it now, fix it later.

The actual problem isn’t visualization. It’s translation. The data is technically present; the user is left to assemble it into an answer.

A different idea

ChatOBD2 closes the gap by deciding for them.

The interface is intentionally simple. The system behind it isn’t. Structured diagnostic inputs feed into layered interpretation, then into a constrained model call, then into a constrained output. Every scan returns the same shape: is it safe to drive, how confident is the answer, what matters most, what to do next.
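The fixed return shape can be sketched as a type plus a validator that rejects any model output not matching it. This is a minimal illustration, not the product's actual schema: the names `ScanVerdict`, `DriveVerdict`, `parseScanVerdict`, the three verdict values, and the 0 to 1 confidence scale are all assumptions made for the example. Only the four questions come from the description above.

```typescript
// Hypothetical shape: the four fields mirror the four questions every scan answers.
type DriveVerdict = "safe" | "caution" | "do_not_drive"; // assumed tiers

interface ScanVerdict {
  safeToDrive: DriveVerdict; // is it safe to drive
  confidence: number;        // how confident is the answer (assumed 0..1)
  topConcern: string;        // what matters most
  nextStep: string;          // what to do next
}

const DRIVE_VERDICTS: readonly DriveVerdict[] = ["safe", "caution", "do_not_drive"];

// Validate untrusted model output before it reaches the UI.
// Anything outside the shape is rejected instead of rendered.
function parseScanVerdict(raw: unknown): ScanVerdict {
  if (typeof raw !== "object" || raw === null) {
    throw new Error("verdict must be an object");
  }
  const r = raw as Record<string, unknown>;

  const safeToDrive = r.safeToDrive;
  if (
    typeof safeToDrive !== "string" ||
    !DRIVE_VERDICTS.includes(safeToDrive as DriveVerdict)
  ) {
    throw new Error("safeToDrive must be one of: " + DRIVE_VERDICTS.join(", "));
  }

  const confidence = r.confidence;
  // The negated range check also rejects NaN.
  if (typeof confidence !== "number" || !(confidence >= 0 && confidence <= 1)) {
    throw new Error("confidence must be a number between 0 and 1");
  }

  const topConcern = r.topConcern;
  if (typeof topConcern !== "string" || topConcern.trim() === "") {
    throw new Error("topConcern must be a non-empty string");
  }

  const nextStep = r.nextStep;
  if (typeof nextStep !== "string" || nextStep.trim() === "") {
    throw new Error("nextStep must be a non-empty string");
  }

  return {
    safeToDrive: safeToDrive as DriveVerdict,
    confidence,
    topConcern,
    nextStep,
  };
}
```

Validating at the boundary is one way to keep the output "constrained": a malformed response fails loudly in one place rather than leaking into the verdict card.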

The model is one stage in a structured pipeline. Its job is reducing complexity, not generating prose.

Three decisions that shaped the product

The verdict comes first, before any text. When a scan finishes, the safe-to-drive answer renders first. Full-width, color-coded, ahead of every paragraph. The tradeoff is less narrative warmth. Anyone glancing at the screen has the answer they came for in under a second.

Confidence is part of the answer. A partial scan changes what the verdict means. Showing scan quality as a fixed bar instead of hiding it costs a small piece of optimism and earns a much larger piece of trust.

Prompts live in the system, not in components. When prompts get scattered across components, every copy change is a hunt across files and the analytics taxonomy quietly drifts behind it. Pulling them into one module, keyed by verdict tier and code context, means a single change updates the whole product, and the analytics stay coherent across it.
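One way to picture a single prompt module keyed by verdict tier and code context is a nested lookup table with a stable id per entry. Everything in this sketch is hypothetical: the tier and context names, the prompt copy, and the `resolvePrompt` helper are placeholders, not the product's real module.

```typescript
// Hypothetical keys; the real tiers and contexts are not specified in the write-up.
type VerdictTier = "safe" | "caution" | "do_not_drive";
type CodeContext = "no_codes" | "pending" | "active";

// Typing the table as a full Record means the compiler flags any missing
// tier/context combination, so copy cannot silently go unhandled.
const PROMPT_TEXT: Record<VerdictTier, Record<CodeContext, string>> = {
  safe: {
    no_codes: "Confirm the car is healthy and suggest a routine re-scan.",
    pending: "Explain the pending code and when to re-scan.",
    active: "Explain the active code and why driving is still safe.",
  },
  caution: {
    no_codes: "Explain why scan quality limits the verdict.",
    pending: "Explain the pending code and what to watch for.",
    active: "Explain the active code and how soon to book a repair.",
  },
  do_not_drive: {
    no_codes: "Explain the non-code finding that makes driving unsafe.",
    pending: "Explain why the pending code makes driving unsafe.",
    active: "Explain the active code and advise stopping now.",
  },
};

interface ResolvedPrompt {
  id: string;   // stable key, reusable as the analytics event name
  text: string; // prompt copy for this tier and context
}

// Single lookup used by every surface, so copy and analytics ids change together.
function resolvePrompt(tier: VerdictTier, context: CodeContext): ResolvedPrompt {
  return { id: `${tier}.${context}`, text: PROMPT_TEXT[tier][context] };
}
```

Because the id is derived from the same keys as the copy, an analytics event tagged with `resolvePrompt(...).id` cannot drift away from the prompt it describes.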

The shape of the system

Chat surface as the home screen. Verdict card as the unit of output. The model held as one structured stage between deterministic input and constrained output.

The interface stops asking the driver to interpret data and starts telling them what to do next.

This is the moment the system stops exposing complexity and starts resolving it.

From data to decision

What a scan returns

Run a scan. Anything I should worry about?

One pending code, no immediate action

P0420 (catalyst efficiency) is logged but not active. Drivetrain pulled clean across two cycles. Re-scan in 200 mi.

- P0420 Catalyst efficiency below threshold

- 12 systems Healthy across drivetrain & emissions

Data becomes understanding

Same premise, different format.

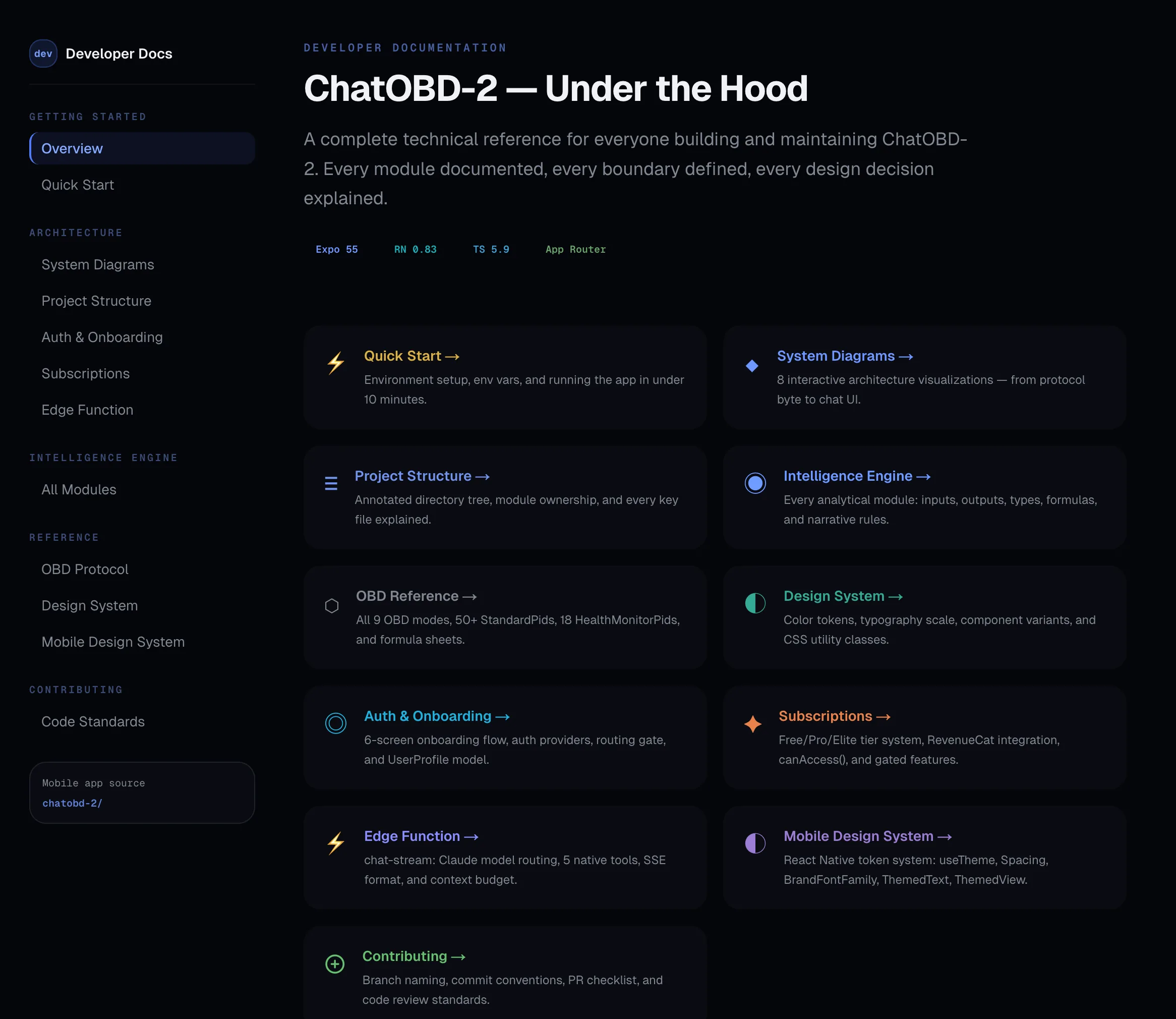

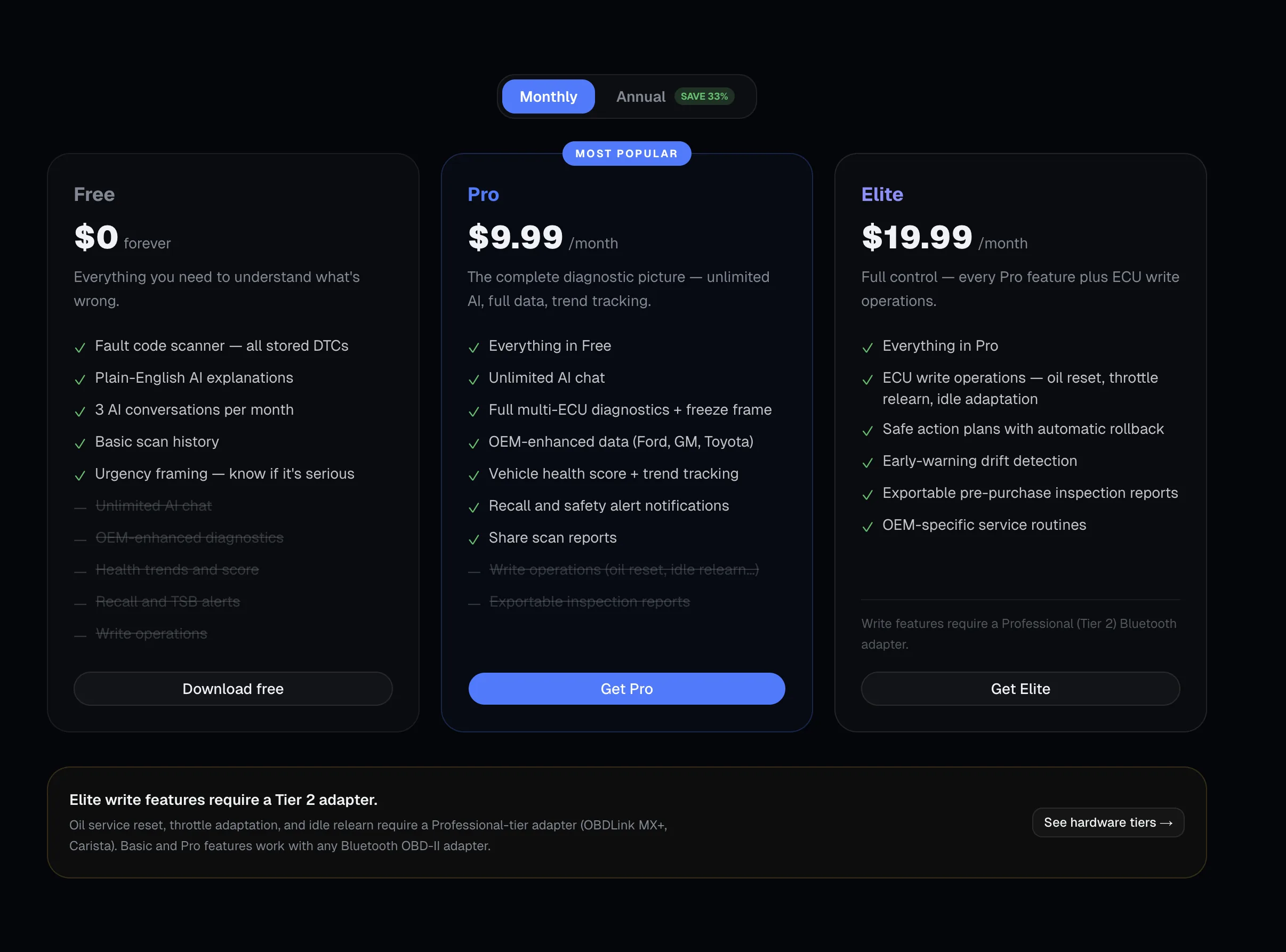

The marketing site carries the same product premise into a public-facing surface. Same "verdict comes first" instinct, same constraint thinking. Designed and built end to end. Visual exploration where it helped, hand-written code everywhere else.

The system underneath the surface.

Six deterministic reasoning layers run before every model call. The system prompt is composed per scan from a 38,000-token budget: P0 sections always make the cut, and lower-priority sections land only if budget remains. The system also covers BLE adapter integration and write operations with rollback. The dev surface is treated as a designed product, not a wiki, with the same care as the user-facing app.
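The budgeted prompt composition described above can be sketched as a two-pass selection: P0 sections are taken unconditionally, then lower-priority sections are added in priority order while budget remains. This is an assumption-heavy illustration: `PromptSection`, `composePrompt`, the characters-divided-by-four token estimate, and the choice to skip an oversized section and keep trying smaller ones are all hypothetical, not the product's implementation.

```typescript
interface PromptSection {
  name: string;
  priority: number; // 0 means P0; larger non-negative integers are lower priority
  text: string;
}

interface ComposedPrompt {
  prompt: string;
  included: string[];
  dropped: string[];
  tokensUsed: number;
}

// Rough placeholder heuristic (about 4 characters per token); a real
// tokenizer would replace this.
const estimateTokens = (text: string): number => Math.ceil(text.length / 4);

function composePrompt(
  sections: PromptSection[],
  budget: number = 38000,
  countTokens: (text: string) => number = estimateTokens,
): ComposedPrompt {
  const keep = new Set<number>();
  let tokensUsed = 0;

  // Pass 1: P0 sections always make the cut.
  sections.forEach((s, i) => {
    if (s.priority === 0) {
      keep.add(i);
      tokensUsed += countTokens(s.text);
    }
  });
  if (tokensUsed > budget) {
    throw new Error("P0 sections alone exceed the token budget");
  }

  // Pass 2: remaining sections, highest priority first, only if they fit.
  const optional = sections
    .map((s, i) => ({ s, i }))
    .filter(({ s }) => s.priority > 0)
    .sort((a, b) => a.s.priority - b.s.priority);

  for (const { s, i } of optional) {
    const cost = countTokens(s.text);
    if (tokensUsed + cost <= budget) {
      keep.add(i);
      tokensUsed += cost;
    }
  }

  // Emit kept sections in their original document order.
  // Separator characters are not counted against the budget in this sketch.
  const included = sections.filter((_, i) => keep.has(i));
  const dropped = sections.filter((_, i) => !keep.has(i));
  return {
    prompt: included.map((s) => s.text).join("\n\n"),
    included: included.map((s) => s.name),
    dropped: dropped.map((s) => s.name),
    tokensUsed,
  };
}
```

Returning the `dropped` list alongside the prompt makes it possible to log which sections a given scan lost to the budget, which is useful when tuning priorities.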