How I work.

The short version comes first. The long version is the rest of this page.

A lot of the work I take on looks like repair. A platform that grew without a system. A site that ranks well and converts badly. A frontend that looks fine in Chrome and falls apart on iOS 15. Existing AI tooling that needs a real home in the codebase. Greenfield happens, but most of the work I do isn't that.

I'm a senior generalist. UX, frontend, design systems, content architecture, SEO, performance, accessibility, AI-assisted prototyping. Whichever subset the work needs, on whatever platform the company runs on. The skills sit in one head, so the tradeoffs between them get made in one head.

A normal week

Concretely, the recurring work in any given week looks something like this.

- Modify a Razor partial that's used in fifty places, knowing the typed ViewModel will surface every consumer that needs to update.

- Argue for a slower header hero against the "but the carousel is what marketing wants" reflex, and sequence the migration so the analytics taxonomy doesn't go dark.

- Open a hand-authored CSS file, find the spacing token that's almost right but not quite, decide whether to add a new token or stretch the existing one. Most of the time it's stretch.

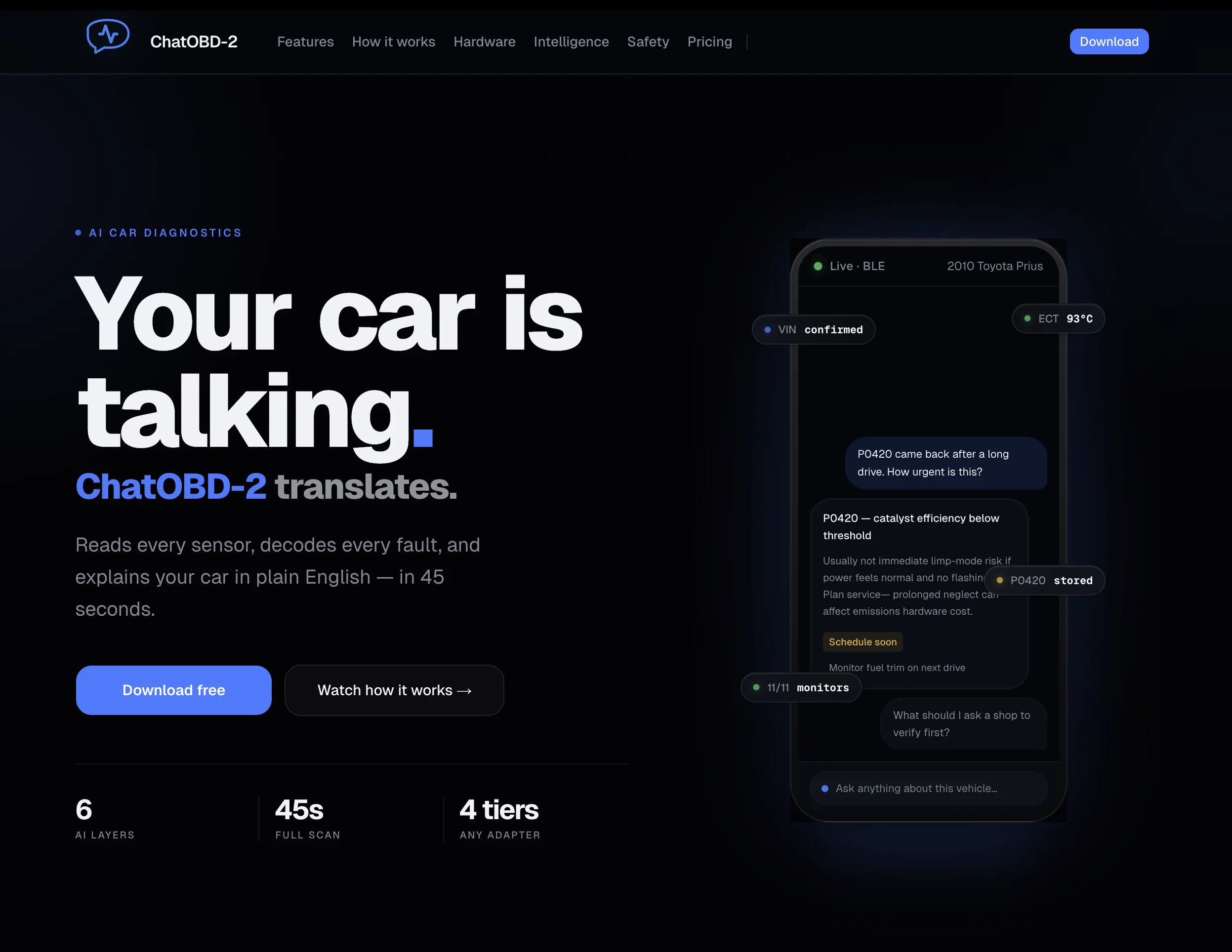

- Write a prompt module for ChatOBD2 that lives in the system, not in a component. Version it. Budget it inside the 38,000-token system prompt assembly.

- Read a Figma file someone handed off. Notice the spacing they almost got right. Translate it into production at fidelity, while quietly fixing the spacing.

- Run Lighthouse, watch the LCP regress when a marketing team drops in a hero image, find a workable compromise that keeps both groups happy.

- Ship something into production that nobody but me will notice. Watch the bug board not light up.

How AI actually fits in

Two places: in the products I ship, and in the way I build them. The shape is the same in both: read the constraint, translate it into a prompt that lives in source, execute where the work fits best, ship reviewable output.

- 01 Constraint: Read what already exists

- 02 Interrogate: Use the model to surface gaps in the spec

- 03 Prompt module: Typed, versioned, in source

- 04 Execute: Claude · Cursor · Claude Design · Figma

- 05 Review & ship: Output in code or schema-bound

In the products. ChatOBD2 is the worked example. Vehicle data flows through six deterministic reasoning layers before any model call. The system prompt isn't a string; it's a module that assembles fragments by priority inside a 38,000-token budget, keyed by verdict tier and code context. The fragments are typed, versioned, and reviewed in pull requests like any other source. A copy change is a one-file edit. The analytics taxonomy stays coherent because every fragment is tagged. Outputs are schema-bound, which means the rendering layer doesn't apologize for the model.
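The assembly step described above can be sketched in TypeScript. This is a minimal illustration under stated assumptions, not ChatOBD2's actual source: the `PromptFragment` shape, the `VerdictTier` values, the `assemblePrompt` function, and the skip-what-doesn't-fit policy are all hypothetical. Only the 38,000-token budget and the idea of priority-ordered fragments keyed by verdict tier and code context come from the description.

```typescript
// Hypothetical types and names, for illustration only.
type VerdictTier = "ok" | "watch" | "urgent";

interface PromptFragment {
  id: string;           // stable name, doubles as an analytics tag
  version: number;      // bumped in the pull request that changes the text
  priority: number;     // lower number = assembled first
  tokens: number;       // token cost, assumed to be counted ahead of time
  tiers: VerdictTier[]; // verdict tiers this fragment applies to
  codes?: string[];     // trouble codes it applies to; omitted = any context
  text: string;
}

interface AssembledPrompt {
  text: string;
  usedTokens: number;
  included: string[]; // ids of fragments that made it in
  dropped: string[];  // ids of applicable fragments that did not fit
}

const TOKEN_BUDGET = 38000;

function assemblePrompt(
  fragments: PromptFragment[],
  tier: VerdictTier,
  activeCodes: string[],
  budget: number = TOKEN_BUDGET,
): AssembledPrompt {
  // Keep only fragments that match the verdict tier and the code context,
  // then order by priority (id as a tiebreaker so output is deterministic).
  const applicable = fragments
    .filter((f) => f.tiers.includes(tier))
    .filter((f) => f.codes === undefined || f.codes.some((c) => activeCodes.includes(c)))
    .sort((a, b) => a.priority - b.priority || a.id.localeCompare(b.id));

  const included: PromptFragment[] = [];
  const dropped: string[] = [];
  let usedTokens = 0;

  // Greedy fill: take each fragment if it still fits, otherwise record it
  // as dropped and keep going so smaller, lower-priority fragments can fit.
  for (const f of applicable) {
    if (usedTokens + f.tokens <= budget) {
      included.push(f);
      usedTokens += f.tokens;
    } else {
      dropped.push(f.id);
    }
  }

  return {
    text: included.map((f) => f.text).join("\n\n"),
    usedTokens,
    included: included.map((f) => f.id),
    dropped,
  };
}
```

Returning the `included` and `dropped` ids alongside the text is one way to keep the budget observable: a fragment that silently falls out of the prompt shows up in the result instead of disappearing.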

The prompt practice. Prompts as production code. New behavior lands as a new fragment with a clear name. Failure modes are tested. Token budgets are tracked the way any other resource budget is. The result is AI you can keep iterating on, in the same review loop as the rest of the codebase.

What a scan returns

Run a scan. Anything I should worry about?

One pending code, no immediate action

P0420 (catalyst efficiency) is logged but not active. Drivetrain pulled clean across two cycles. Re-scan in 200 mi.

- P0420: Catalyst efficiency below threshold

- 12 systems: Healthy across drivetrain & emissions
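One way to picture "schema-bound" for a result like the one above: the model's reply is parsed against a fixed shape before the rendering layer ever sees it, and anything that does not match is rejected rather than displayed. The sketch below is hypothetical. The `ScanVerdict` fields, the `parseScanVerdict` function, and the loose trouble-code pattern are assumptions made for illustration, not the real ChatOBD2 schema.

```typescript
// Hypothetical schema for a scan verdict; field names are illustrative.
type CodeStatus = "pending" | "active";

interface ScanCode {
  code: string;   // e.g. "P0420"
  label: string;  // e.g. "Catalyst efficiency below threshold"
  status: CodeStatus;
}

interface ScanVerdict {
  headline: string;
  summary: string;
  codes: ScanCode[];
  rescanInMiles: number | null;
}

function isRecord(v: unknown): v is Record<string, unknown> {
  return typeof v === "object" && v !== null && !Array.isArray(v);
}

function isCodeStatus(v: unknown): v is CodeStatus {
  return v === "pending" || v === "active";
}

// Loose shape check for a trouble code: one system letter plus four
// hex digits. An assumption for the sketch, not a full standard check.
const CODE_PATTERN = /^[PBCU][0-9A-F]{4}$/;

// Returns a typed verdict, or null if the raw model output does not
// match the schema. The renderer only ever receives the typed value.
function parseScanVerdict(raw: string): ScanVerdict | null {
  let data: unknown;
  try {
    data = JSON.parse(raw);
  } catch {
    return null; // not JSON at all
  }
  if (!isRecord(data)) return null;

  const { headline, summary, codes, rescanInMiles } = data;
  if (typeof headline !== "string" || typeof summary !== "string") return null;
  if (!Array.isArray(codes)) return null;

  let rescan: number | null;
  if (rescanInMiles === null) rescan = null;
  else if (typeof rescanInMiles === "number") rescan = rescanInMiles;
  else return null;

  const parsedCodes: ScanCode[] = [];
  for (const item of codes) {
    if (!isRecord(item)) return null;
    const { code, label, status } = item;
    if (typeof code !== "string" || !CODE_PATTERN.test(code)) return null;
    if (typeof label !== "string") return null;
    if (!isCodeStatus(status)) return null;
    parsedCodes.push({ code, label, status });
  }

  return { headline, summary, codes: parsedCodes, rescanInMiles: rescan };
}
```

A `null` result gives the caller one explicit place to retry or fall back, so the rendering code never has to guess at a malformed reply.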

In the build. Claude and Cursor handle code work; the choice between them is contextual. Figma stays for precision and design-system maintenance. Claude Design comes in for visual exploration when a frame is faster to reason about than markup. The codebase is the source of truth across all of them.

AI as a tool in the build, not the product itself. The model is one stage in a structured pipeline. A human owns every decision that ships. The goal is repeatability.

What I'm best at on a team

- Owning the whole web experience for a team that doesn't have a department for it. One person carrying UX, the design system, the frontend, the content model, the SEO graph, and the performance budget.

- Modernizing a platform that can't be rewritten. Long-running stacks have opinions. I read those first, plan around them, and ship in increments that don't require a flag day.

- Building systems engineers actually use. Typed components. Tokens. Layout primitives. Real consumers from day one. No big-bang library that ships into a vacuum.

- Sitting between design and engineering and unblocking both. I read intent, not just specs. Most production breakage lives in the gap a handoff doesn't see.

- Building AI features as engineering. Prompt modules in source. Schema-bound outputs. Structured pipelines that hold up under traffic. ChatOBD2 is the worked example: six deterministic layers in front of every model call, prompts assembled per scan from a 38,000-token budget.

Tools I reach for

The default stack changes per project. The judgment about which tool to use for which job stays the same.

- Claude: Code work and reasoning. The default for non-trivial refactors and anything that needs to think across more than one file.

- Cursor: Local iteration. Same code work as Claude, different interaction model. Whichever is faster on the task at hand.

- Figma: Precision design, component-system maintenance, handoff fidelity, and the occasional client review.

- Claude Design: Visual exploration when a frame is faster to think in than code. Output lands back in the codebase.

Across the work

Different companies, different stacks, the same question underneath: how do I move this surface from where it is to where it should be without breaking what's already running.